Molly Russell was 14 years old when she chose to end her life in 2017. Since then, her family has been waging an arduous battle against those who, according to them, are the real culprits. Indeed, the coroner, Andrew Walker, concluded that this young British woman died from an act of self-harm caused by depression due to her interaction with harmful online content.

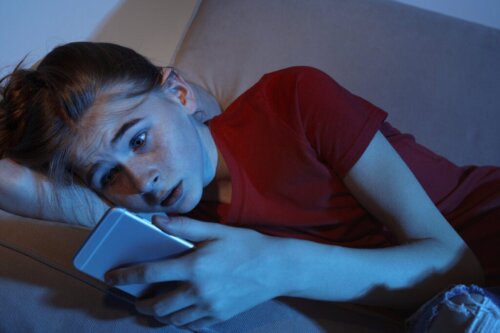

Molly had a board on Pinterest related to suicide. Likewise, her Twitter account (with more than 200,000 followers) published quotes related to the idea of ceasing to exist and of taking her own life. She made similar comments on Instagram. The algorithm of these social media platforms continuously offered her images of the same theme.

Molly did suffer from a depressive disorder. However, the digital universe, lacking filters and aimed only at permanently capturing users, reinforced and fed her idea of suicide, until, sadly, she fulfilled it. Five years have now passed since she died. Her devastated family continues to demand responsibility and change.

Although content linked to suicide is prohibited on social media, users have found ways to keep creating and receiving content on the subject. In Molly Russell’s case, this content acted as the final trigger for her fatal decision.

The top executives of Pinterest took responsibility

Molly Russell spent endless hours on her cell phone and computer looking at content related to suicide. Upon investigation, it was found that Pinterest sent her emails with messages such as: “Ten boards about depression that you might like”. We even know that the last thing she did before she died was to save an image on Instagram with a message about depression.

Hearing these facts may well fill us with indignation. However, it’s important to make them visible along with the revelations from the British forensic experts. They confirmed that the content Molly received was excessively graphic and extremely harsh. They went on to state that large technology companies like Instagram or Pinterest normalize such content in an irrational way. In effect, they allow the algorithms to act in a completely unbalanced manner.

The coroner’s report blamed Molly’s tragic ending on Meta, the parent company of Instagram, and on Pinterest. It can’t be denied that the corporate culture of these profit-oriented companies is affecting the mental health of teens today. Moreover, in 2018, studies conducted by several universities highlighted the fact that social media increased both self-harm and suicidal tendencies in adolescents.

The issue is extremely serious. So much so that, for the first time, Meta had to appear at Molly’s inquest and face the fact that they’d encouraged a teenager to commit suicide.

Those responsible for Meta admitted that, in 2017, there was content online that should’ve been removed. However, according to them, the platform has since improved a great deal in this respect.

Pinterest admitted its platform wasn’t secure

Meta’s head of health and wellness, Elizabeth Lagone, and Pinterest’s head of community operations, Judson Hoffman, both had to testify at Molly’s inquest. The first thing the senior executives did was to express their regret over what had happened to Molly Russell. In addition, they admitted that Pinterest wasn’t a secure platform.

- They also pointed out that content on both Pinterest and Instagram had violated the companies’ own policies. In other words, images and information were published that broke the rules regarding the usage of these applications.

- They indicated that there was content on their social media sites that should be removed. However, they insisted that their companies were firmly committed to making the use of their platforms a healthy experience for everyone, particularly adolescents. They knew that neither Pinterest nor Instagram was perfect, but suggested that, from 2017 to the present, many things had improved.

Has social media really improved since Molly Russell died?

Has Molly Russell’s death really served to change the policies of these companies as the girl’s family wanted? Are these platforms much more secure now? Let’s find out.

Review teams overlook some images

Every day, a large amount of new content is published, and some of it escapes the filter of the review teams. While it’s true that both Instagram and Pinterest apply restrictions to all types of images with sexual content or that invite suicidal ideation, there are certain images that elude the algorithms.

Alternative keywords and hashtags

Today, there are groups and communities that seek out, reinforce, and promote problem behaviors. They often use code words and hashtags with double meanings. This makes it easy to find information related to suicide, self-harm, or behaviors linked to bulimia or anorexia, etc.

Molly Russell’s father is fighting for the authorities to develop an online safety bill as soon as possible.

Don’t look for information on suicide on social media

We all go through difficult times when life hurts. At these times, almost out of inertia or habit, we might look for information on the Internet and on social media. But we must understand that, when we’re going through bad times, what we find on our Instagram, Twitter, or TikTok accounts may, instead of helping us, make us feel worse.

If there’s something in your life that isn’t going well, if you feel alone, lost, and in great emotional pain, the first thing you should do is talk to someone you trust. Put social media to one side for a while and explain to them what you’re going through. You’ll soon realize that, in reality, you’re not alone. That there are people who love you and want to help you.

You need to realize that the pain you’re suffering now won’t last forever. There are resources within your reach that’ll help you to feel better and move on from this dark time. So please, if you’re thinking of looking for an ending, give yourself another chance and seek support. And remember that what you find in the feeds of social media won’t always help you.

The post The Death of Molly Russell: Social Media on Trial appeared first on Exploring your mind.
